- TheTechOasis

- Posts

- Microsoft Leads Us Into the World's of LMMs and MultiAgents

Microsoft Leads Us Into the World's of LMMs and MultiAgents

🏝 TheTechOasis 🏝

Breaking down the most advanced AI systems in the world to prepare you for your future.

5-minute weekly reads.

TLDR:

AI Research of the Week: Microsoft presents LLaVa 1.5, a new open-source paradigm

Leaders: Everyone will Manage Teams in the future, only most of the team members won’t be human; The MultiAgent Paradigm is now a reality

🤯 AI Research of the week 🤯

I’ve talked about the AI war between open-source and private models many times, and always the outcome seems to be the same.

Open-source seems great and full of promises, but is often simply wishful thinking and impracticality.

But now things might have changed.

Microsoft, alongside the Universities of Wisconsin-Madison and Columbia, has presented the newest version of the LLaVa model, LLaVa 1.5.

LLaVa, one of the first truly performant Large Multimodal Models (LMMs), has been upgraded and the results are highly impressive, considering it is orders of magnitude, and I mean hundreds of times, smaller than models like GPT-4 Vision, OpenAI’s newest release that is taking the world by storm.

The recently released paper not only gives us huge insight on how state-of-the-art multimodal models are built, but also manages to prove the entire industry wrong.

Yes, everybody was wrong about open-source, including myself.

Oh my Sweet, Sweet Grafting

First and foremost, we must clarify what multimodality is, as this word gets thrown around aimlessly.

What really is multimodality

In a strict and concise form, multimodality represents the capacity of a model to process at least two different modalities, a modality being a type of input data (words, images, sounds, et al).

However, this definition can easily be fooled. If I get a speech-to-text model and an LLM, I can easily create a solution that processes both speech and text.

But does that make my solution multimodal?

Yes, but the models used certainly aren’t multimodal.

A great example of this is ChatGPT. In its new GPT-4V version, ChatGPT is image & text multimodal, but it also allows you to process and synthesize speech. Thus, the solution processes three types of input (text, images, and speech) but the model in reality is only multimodal for text and images.

Don’t get fooled by User Interfaces!!

In its purest form, true multimodality occurs when a model’s different modality components share a common embedding space.

In other words, taking a visual and text model like LLaVa, it happens when the visual and text components encode the input into the same high-dimensional vectorial space.

Embedding space: As machines only understand numbers, all input, be that text, images, or spectrograms, is transformed into a dense vector, dense because it incorporates key semantic information about the underlying data.

In this space, similar concepts have similar vectors, signaling the model that the closer two vectors are, the similar the underlying data they represent is.

This is how machines understand our world. It’s all based on relatedness, as OpenAI describes it.

Therefore, if an image of the Taj Mahal and its Wikipedia text description share very similar vectors in this embedding space, we have achieved multimodality, as the model understands the salient objects (the Taj Mahal in this case) of both modalities as the same ‘thing’, just like a human would.

But how did the researchers achieve this with LLaVa 1.5?

Grafting, a cost-effective and elegant method

There are three ways to achieve multimodality:

Tool/model-based methods: By combining different models or tools you can allow your solution to handle multiple inputs. While the solution is multimodal, the underlying models aren’t.

Grafting: Implies using pre-trained image encoders and LLMs and projecting the encoder’s vector embedding into the LLM’s latent space using a projecting matrix or an MLP layer.

Generalist systems: Most probably how GPT-4V was trained, training an image encoder and an LLM from scratch into the same embedding space. Here, all weights are trained from scratch.

LLaVa was trained using grafting, which is awesome for one key reason:

The image encoder and the LLM’s weights remain frozen, and we simply train the projecting matrix to learn to transform the encoder’s vectors, or ‘grafting’ them, into the LLM’s high-dimensional space.

Source: Google

As you can see above, when an image is sent to the image encoder (a CLIP encoder in LLaVa’s case), it processes it, and then it goes through an ‘adapter’ which in reality is simply a matrix that transforms the output vector of the image encoder into an acceptable vector that the LLM (Vicuna in LLaVa’s case) understands.

After, the model gets fine-tuned using a visual instruction dataset to create a chatbot that will dutifully answer questions regarding images and text.

In a way, you can think about this adapter as a ‘language translator’ that would help an English woman talk with a German woman.

Grafting not only works great, as LLaVa will prove in a second, but also it makes the whole process very cost-effective, as you only have to learn the weights of the projection matrix, the rest of the components remaining frozen in their pre-trained form.

If you’re sitting on top of a hundred million dollars, you can go with the third option and train your multimodal model from scratch.

Probably the best option in terms of performance, but with a very hard-to-justify business case unless you’re the next trillion-dollar company like OpenAI with a 2.4 trillion-dollar at the time of writing Big Tech company funding your efforts.

And what are LLaVa’s results?

Another day, another SOTA

The results obtained from LLaVA 1.5, especially the 13B model, are impressive.

Out of the 12 most popular multimodal benchmarks, LLaVa 1.5 is the new state-of-the-art (SOTA) for 11 out of the 12, beating other popular open-source models by a landslide.

Disclaimer: No, it’s not at GPT-4V’s level in absolute terms, obviously, but relative to its size, it is.

But seeing a model beat others in AI has become business as usual these days.

However, with LLaVa 1.5 things have taken a turn for the unexpected.

LLaVa-1.5 manages to beat all other models, models that use the same base LLM, by using orders of magnitude less data and computational effort, in some cases requiring x2,500 times less data for pre-training ('PT’ column), and 75 times less (‘IT’ column) in the fine-tuning stage.

In LLaVa’s case, they didn’t use a projection matrix but a two-layer MultiLayer Perceptron (MLP) that performed the transformation.

Consequently, LLaVa-1.5 was trained, literally, in a fricking day with an 8-A100 NVIDIA GPU node for a total of 26 hours.

Ladies and gents, open-source has just become affordable, finally.

Therefore, training high-performant models has finally become attainable for enterprises, that could leverage their data to train open-source models that make them stand above the rest.

In a world driven by money, not everything is about having the best model, sometimes you just need to make it viable.

But, incredibly, LLaVa-1.5 just happens to be both in the world of open-source LMMs.

🫡 Key contributions 🫡

LLaVa-1.5 models become the first open-source LMMs that are high-performant while extremely efficient to train

The paper gives us great insights into how these models are trained, especially regarding the super popular Grafting method

🔮 Practical implications 🔮

Tech-savvy enterprises can use LLaVa and similar models to fine-tune them with their data and create top models for their niche

For the first time, private models don’t seem to be the ‘only way’ for companies anymore

👾 Best news of the week 👾

😍 The State of AI Report for 2023

💸 Cleanlab raises $30 million to build trustworthy AI systems

🎆 Meta will now allow you to chat with your favorite celebrity

🎙 ElevenLabs introduces ‘AI Dubbing’ to translate your voice to 20 languages

🤩 Here’s the complete Adobe AI presentation with mind-blowing tools

🥇 Leaders 🥇

The MultiAgent Paradigm: Taking AI to a New Dimension

One of the reasons the cloud computing business became so important to many companies is that it democratized a crucial feature in application serving, on-demand horizontal scaling.

Instead of having to continuously upgrade your servers to more powerful ones, to handle increasing requests and, more importantly, peaks and valleys, the cloud offered virtualization features that allowed the deployment of new servers on demand.

Yes, the cloud is simply deploying your apps in easy-to-manage third-party servers. That’s it.

Still, it’s a $500 billion market in 2023 set to be a trillion-dollar one by 2028.

Cloud computing is proof of the power in numbers, and that you don’t need to always have the best server in the world, many times is simply having the capacity to have many at will.

But what does this have to do with AI?

The Two Great Problems

With AI, especially in the world of Large Language Models (LLMs) and Large Multimodal Models (LMMs), most innovation has been through vertical scaling.

Put simply, making the models better and better.

However, no matter how increasingly better the models become, there seem to be two situations where models fail catastrophically:

The moment you put them in out-of-distribution situations — with data that is different from the training data.

The moment the model receives a text sequence that is larger than the context window it has been trained on.

As for the former, although these models are usually foundation models, meaning they can generalize to unseen data, when this data is too different from what they have seen in training, they will still fail.

For example, with Retrieval Augmented Generation (RAG) you can send the model context about dogs and still work fine. The model has seen millions of texts about dogs, so it generalizes correctly.

On the other hand, if you send it information about a new species of animal not seen before, this is out-of-distribution data, data that is nothing like anything it has seen before. In these cases, the chances of error are huge even for the most advanced models.

As for the latter, this has a more technical explanation.

Transformer-based models (basically all general-purpose models today) are based on the attention mechanism, where words ‘talk’ to each other to understand the relationships between them and capture the meaning of text.

The training of these models is done using fixed-size context windows, meaning that any text that the model handles during training never exceeds a certain size.

This is done because computational costs are quadratic to sequence length. If you double the text sequence length, the cost quadruples.

Consequently, models become limited by the amount of text they are ‘used’ to seeing, so the moment you increase that size, they aren’t capable of extrapolating to new sizes, and perplexity falls off a cliff.

Perplexity is the main metric to measure an LLM’s prediction accuracy. It tells us how confident the model is regarding a certain prediction. Bottom line, the less ‘perplexed’ the model is, the better.

As we still haven’t really figured out these two issues, even for cases like GPT-4, one has to ask itself:

Is getting bigger and more expensive models the only way to improve results, or is there another way?

And as cloud computing taught us, there is another option.

In this case, multiagent applications.

The potential? Huge. The promises? Many.

A Group of LLMs Walk into a Bar

You’ve heard about prompt engineering, right?

That super cool new AI job that pays 6 figures in the US that might as well die in the next few months as machines are already better than us at it.

But even though it probably won’t be your next job, it’s crucial in the context of sequence-to-sequence models like ChatGPT.

Debate and Conquer

In AI, input matters.

In Generative AI, input matters a lot.

Put bluntly, if your input to ChatGPT is garbage, ChatGPT will give you garbage back.

Consequently, academia has studied different ways to improve model performance by getting better at communicating with them.

And even though things like Chain-of-Thought (the famous ‘go step by step’) help us communicate on a 1:1 basis with models, researchers have realized that the best results are obtained when combining multiple agents.

In MIT’s Society of Mind paper, researchers found that sending a question to a group of agents who could then discuss the best answer worked best.

In some cases, even when both agents were initially wrong, by debating they managed to reach the correct answer.

This example wasn’t luck, as researchers observed that the ‘debate’ approach worked best most of the time, having a considerable improvement in accuracy with arithmetics, or chess.

Seeing this, many took notice.

MultiAgent Simulations

A few months later, several new papers came out that took this feature to a whole new perspective.

Researchers at Stanford introduced 25 ChatGPT-based AIs into a simulation to study emergent behaviors.

These AIs made friends, and even invited other AIs to a Valentine's party!

But things didn’t stop there.

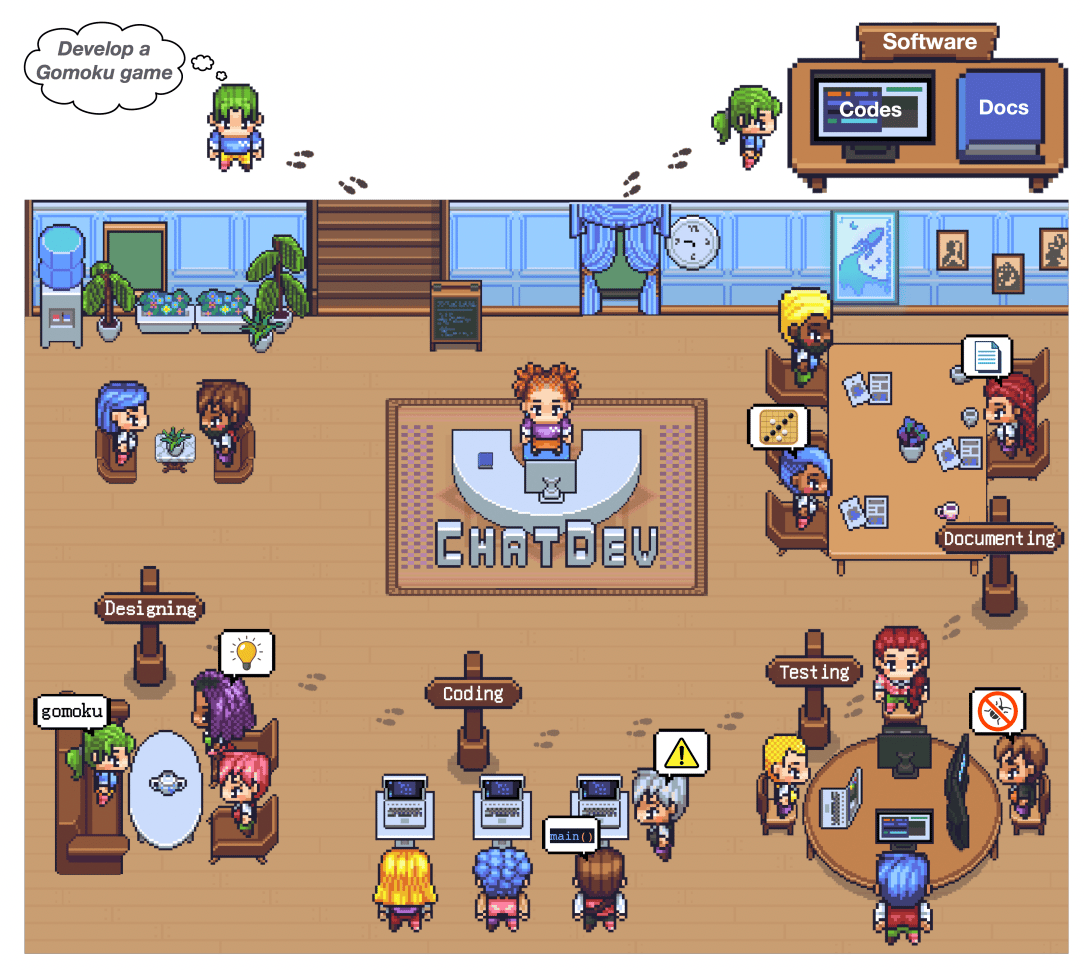

Another collaboration between American and Chinese researchers created ChatDev, a team of AI agents, each with their own roles and tasks, assembling a fully functional developer team.

Design, coding, testing, and document teams worked for the executive team (CEO et al) to develop games on demand.

This paper not only took collaboration into tangible, playable games, but defined interesting features like:

The teams followed a Waterfall methodology

Communication hierarchies

Overall, this fully autonomous team was capable of delivering fully-documented, fully-functioning games in just over 7 minutes with a cost of around a US dollar.

See the pattern?

LLMs/LMMs excel when they collaborate with each other, and as proven by research like Liang et al and others, because:

It encourages divergent thinking

It improves factuality and reasoning

It improves validation procedures

Overall, AI applications that involve multiple LLMs are simply and outright superior to standalone LLM apps, period.

But building these apps is really, really hard… until now.

Microsoft has made it a lot easier to build the multiagent applications that will transform our lives in various ways:

Soon, knowledge workers will have at their disposal entire AI teams that are tailored to their needs and are customizable and adaptable

Citizen developers, people who don’t know how to code, will either way be capable of building fully-fledged applications in no time

Industries like coding, mathematics, or even gaming will transcend into completely new ways of building value

And, amazingly, Microsoft has just made it a lot easier to bring this vision into fruition and quicker than most would have expected, to the point that I’m convinced that entire business will be built upon this principle really soon.

So, how do we build the apps of the future?

Subscribe to Full Premium package to read the rest.

Become a paying subscriber of Full Premium package to get access to this post and other subscriber-only content.

Already a paying subscriber? Sign In.

A subscription gets you:

- • NO ADS

- • An additional insights email on Tuesdays

- • Gain access to TheWhiteBox's knowledge base to access four times more content than the free version on markets, cutting-edge research, company deep dives, AI engineering tips, & more